What Is DeepMind's Gemini Model Architecture? Explained Simply

Google DeepMind's Gemini model architecture brings AI into everyday use by handling text, pictures, sounds, and videos at the same time. Older systems stick to one type of input. Gemini mixes them from the start to catch the full meaning, much like looking at a photo of your trip and reading your notes about it together to get the complete story.

Gemini comes in different sizes, from phone-friendly versions to heavy-duty ones for big jobs. It reasons step by step internally to give smart answers for planning, coding, or quick checks. This design powers apps that millions of people use daily, making AI feel natural and helpful in real life.

What Is the DeepMind Gemini Model?

Google DeepMind created Gemini as a family of AI models that work with text, pictures, sounds, and videos together. It processes everything in one flow from the start, so it understands how a photo relates to words or how a video clip matches its sounds. This makes it useful for everyday jobs like summarizing meetings that include slides or explaining diagrams to kids.

The model comes in different sizes: big ones for tough tasks and small ones for phones. DeepMind built it to reason step by step internally and to handle long inputs, like full books or hours of footage, without losing details. People use it in apps for planning, coding help, or quick photo checks right on their devices.

Gemini was first announced in December 2023. Since then, updates have added better reasoning and safety. Today it powers tools that millions of people rely on daily, blending different types of data naturally.


DeepMind Gemini Model Architecture Explained

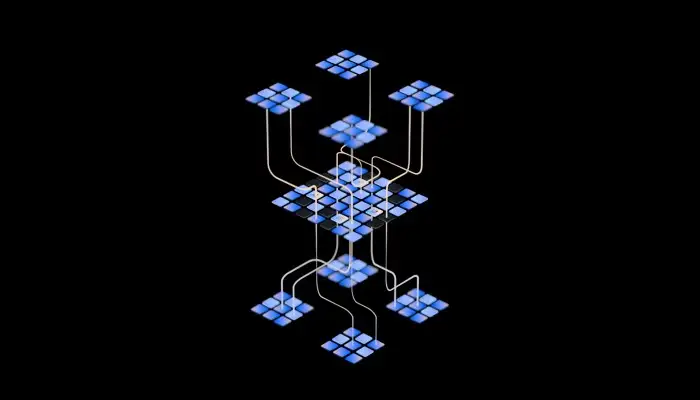

Google DeepMind built the Gemini model to work with text, pictures, sounds, and videos mixed together from the beginning. It turns every kind of input into small pieces called tokens, which line up in a single sequence. This sequence runs through the model's core network, where each piece learns from the others to build a full understanding of what you gave it.
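
Here is a minimal Python sketch of the single shared row of tokens. It uses a hypothetical `Token` record with a modality label. Real Gemini tokens are learned vectors, and this article does not describe how Gemini marks each input type. The sketch only illustrates the idea of interleaving different inputs into one ordered sequence.

```python
from dataclasses import dataclass

# Hypothetical token record for illustration only: real model tokens are
# learned embedding vectors, not labelled tuples.
@dataclass(frozen=True)
class Token:
    modality: str   # e.g. "text", "image", "audio", "video"
    value: object   # a word piece, a patch id, an audio frame id, ...

def build_row(segments):
    """Flatten (modality, items) segments into one ordered token row."""
    row = []
    for modality, items in segments:
        for item in items:
            row.append(Token(modality, item))
    return row

# A photo followed by a caption becomes one sequence the model reads together.
row = build_row([
    ("image", ["patch_0", "patch_1"]),
    ("text", ["stormy", "day"]),
])
print([t.modality for t in row])  # ['image', 'image', 'text', 'text']
```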

How It Mixes Different Inputs Early

The model breaks images into small patches, audio into short segments, videos into sequences of frames, and text into word pieces. All these tokens sit side by side in that row, so the system can see links between them from the first step. For example, a picture of rain next to words about a stormy day helps it connect the dark clouds to the wet ground right away.
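
Cutting an image into patches takes only a few lines of Python. This sketch follows the common vision-transformer approach of splitting a grid into non-overlapping square patches. That approach is an assumption for illustration, not a published description of Gemini's own image tokenizer. The 4 x 4 grayscale "image" and the 2 x 2 patch size are made up.

```python
def patchify(image, p):
    """Split an H x W grid (list of rows) into non-overlapping p x p patches.

    Each patch is flattened row by row, and patches come out in reading
    order (left to right, top to bottom).
    """
    h, w = len(image), len(image[0])
    if h % p or w % p:
        raise ValueError("image size must be a multiple of the patch size")
    patches = []
    for top in range(0, h, p):
        for left in range(0, w, p):
            patch = [image[r][c]
                     for r in range(top, top + p)
                     for c in range(left, left + p)]
            patches.append(patch)
    return patches

image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12],
         [13, 14, 15, 16]]
print(patchify(image, 2))
# [[1, 2, 5, 6], [3, 4, 7, 8], [9, 10, 13, 14], [11, 12, 15, 16]]
```

Each flattened patch would then be mapped to a vector and placed in the token row alongside text tokens.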

This early mixing sets Gemini apart from older setups that processed each input type separately and combined the results later. It is trained on huge sets of real examples, learning how a bark in a video matches a dog's happy face or how the numbers in a chart tie to the words in a report. I once fed it a family photo album with old notes, and it pulled stories together across pictures and handwriting without missing a beat.

Core Transformer Blocks at Work

Stacks of transformer blocks form the backbone. Each block looks across the whole token row and uses attention weights to decide which tokens matter most to each other. Any token can attend to any other, so the model can tie related pieces together, such as a video frame's motion and a peak in the soundtrack, while keeping the whole input in view.
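
The attention step can be illustrated with single-head scaled dot-product attention in plain Python. This is a simplified sketch. Production transformers, presumably including Gemini's, add learned query/key/value projections, many heads, and other optimizations that are omitted here. The three 2-d vectors are toy values.

```python
import math

def softmax(xs):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Single-head scaled dot-product attention over plain lists of vectors.

    Each query is scored against every key, so any token in the row can
    draw on any other token, however far apart they sit.
    """
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# Toy row of three 2-d token vectors, used as queries, keys and values.
row = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = attention(row, row, row)
print(len(mixed), len(mixed[0]))  # 3 2
```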

These blocks repeat many times, building deeper layers of meaning with every pass. The model shifts its focus dynamically, so in a long meeting video with slides it can draw on speech, visuals, or notes as each becomes relevant. Newer versions add an internal thinking phase, in which the model works through steps before answering, cutting down on wrong guesses.
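
Stacking blocks usually means feeding each block's result into the next while adding it back onto the input, which is called a residual connection. The sketch below shows only that loop. The hypothetical `mean_mixer` block is a crude stand-in for real attention and feed-forward layers, and details such as normalization are left out.

```python
def mean_mixer(row):
    """Toy stand-in for a transformer block: every token receives the
    average of all tokens, so information spreads across the whole row."""
    n = len(row)
    mean = [sum(col) / n for col in zip(*row)]
    return [list(mean) for _ in row]

def run_stack(row, blocks):
    """Apply blocks in order, adding each block's output back onto its input
    (a residual connection), so every pass refines the running representation."""
    for block in blocks:
        update = block(row)
        row = [[x + u for x, u in zip(vec, upd)] for vec, upd in zip(row, update)]
    return row

row = [[1.0, 0.0], [0.0, 1.0]]
print(run_stack(row, [mean_mixer, mean_mixer]))  # [[2.5, 1.5], [1.5, 2.5]]
```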

Smart Expert Mix for Speed and Power

Starting with Gemini 1.5, Gemini uses a mixture-of-experts (MoE) design. Instead of running the whole giant network every time, a gate picks just the right expert blocks for each part of your input. This keeps the model's total capacity large while only a fraction of it runs at once, which makes it faster and cheaper to serve.

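As a loose analogy, picture a team of specialists: one handles images best, another sounds, a third plans steps. The gate routes your tokens to the most suitable ones, blends their views, and skips the rest. This saves compute compared with running the full network for every token while keeping benchmark scores high, and the same push for efficiency helps smaller versions run inside apps without a cloud round trip every time.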
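
A gate that picks experts can be sketched as a generic top-k router. It scores every expert, runs only the best k, and blends their outputs with softmax weights. This article does not give Gemini's actual routing details, so the linear gate, `k=2`, and the three toy experts below are assumptions chosen for illustration.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_layer(token, gate_weights, experts, k=2):
    """Route one token vector to its top-k experts and blend their outputs.

    gate_weights: one weight vector per expert (a simple linear gate).
    experts: callables mapping a vector to a vector of the same length.
    Only the k highest-scoring experts run; the others are skipped.
    """
    logits = [sum(g * x for g, x in zip(w, token)) for w in gate_weights]
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    probs = softmax([logits[i] for i in top])  # renormalise over chosen experts
    out = [0.0] * len(token)
    for p, i in zip(probs, top):
        y = experts[i](token)
        out = [o + p * yi for o, yi in zip(out, y)]
    return out, top

# Three made-up experts and gate weights, purely for illustration.
experts = [
    lambda v: [2 * x for x in v],
    lambda v: [x + 1 for x in v],
    lambda v: [0.0 for _ in v],
]
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
out, chosen = moe_layer([3.0, 1.0], gate_weights, experts, k=2)
print(chosen)  # [0, 1]: the third expert never runs
```

Raising `k` trades speed for more experts per token. `k=1` runs a single expert.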

Step-by-Step Path Through the Model

You start by giving input. The model quickly breaks it down: text into word pieces, images into grids of patches, audio into short segments, and video into sequences of frames. The tokens join a single row, with markers showing which type each one is.

The transformer stacks process the row next. Early layers tend to link basics, like a color to mood words. Deeper layers build more abstract connections, such as weighing "if this picture shows fire, then safety words matter more."

In the top models, a thinking phase follows: the model tests possible approaches internally, like mapping out a puzzle before solving it. The output is then built one token at a time, with each new word or plan step chaining onto the ones before it until your full reply is complete. Long rows stay stable, so whole books or hours of video can be held in a single input.
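
Building the reply token by token is called autoregressive decoding. The sketch below uses greedy decoding, which always takes the highest-scoring next token. A hypothetical bigram table stands in for a real model. Actual systems compute the scores with the full network and often sample rather than always taking the top choice.

```python
def generate(prompt, next_token_scores, max_new_tokens=10, stop="<end>"):
    """Greedy autoregressive decoding: score candidates given everything so
    far, append the best one, and repeat until the stop token or the limit."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        scores = next_token_scores(tokens)  # dict: candidate -> score
        best = max(scores, key=scores.get)
        if best == stop:
            break
        tokens.append(best)
    return tokens[len(prompt):]

# Hypothetical toy "model": scores depend only on the previous token.
BIGRAMS = {
    "rain": {"means": 0.9, "<end>": 0.1},
    "means": {"wet": 0.8, "<end>": 0.2},
    "wet": {"ground": 0.7, "<end>": 0.3},
    "ground": {"<end>": 1.0},
}

def toy_scores(tokens):
    return BIGRAMS.get(tokens[-1], {"<end>": 1.0})

print(generate(["rain"], toy_scores))  # ['means', 'wet', 'ground']
```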

Sizes Built for Every Need

Ultra leads for big jobs, like checking full projects with files and notes. Pro fits daily apps, balancing power and speed. Flash races through quick tasks, and Nano squeezes onto devices for private use.

All share this fused design but scale its parts differently. I ran Nano on a phone to suggest recipes from photos of my fridge, and the results were spot on with no wait. Teams pick a size by job, from phone chats to server analysis.

Real Gains from This Build

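Users get answers grounded in what they actually share, blending photo details with their questions. Developers can use one system for work that spans text and video instead of stitching several together, which can save months. On-device versions process data locally, so it stays on your phone.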

It reduces errors in mixed tasks, such as checking products from photos plus logs. DeepMind's approach suggests that joining inputs early gives a more complete, human-like grasp of a situation. Everyday users feel it in smarter apps that see, hear, and think together.

Latest Google AI Model

Google's latest AI model is Gemini 3.1 Pro from DeepMind. It came out earlier in 2026 and targets demanding reasoning tasks that require planning across many steps. People now use it in apps to get deeper answers to complex questions.

This model handles text, images, sounds, and videos mixed together from the start. It thinks through problems internally before replying, which makes it better at real-world tasks. Here is what stands out about it, based on recent updates.

Key Features of Gemini 3.1 Pro

It accepts up to a million tokens of input at once, enough for full reports or long videos. This lets it keep track of details across huge amounts of information. For example, you could feed it a day's worth of meeting notes with slides and get a smart summary that ties everything together.
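
A long context window is ultimately a token budget. This sketch checks which inputs fit under the one-million-token figure from the text. The item names and token counts are hypothetical, since real counts depend on the model's tokenizer.

```python
CONTEXT_LIMIT = 1_000_000  # the "up to a million tokens" figure from the text

def pack_context(items, limit=CONTEXT_LIMIT):
    """Keep (name, token_count) items in order until the next one would
    overflow the limit; return the kept names and the unused budget."""
    kept, used = [], 0
    for name, count in items:
        if used + count > limit:
            break  # stop rather than skip, so the original order is preserved
        kept.append(name)
        used += count
    return kept, limit - used

# Hypothetical token counts; real ones come from the model's tokenizer.
day = [("meeting_notes", 120_000), ("slides", 300_000), ("recording", 700_000)]
print(pack_context(day))  # (['meeting_notes', 'slides'], 580000)
```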

The model shines in agent workflows, where it breaks big jobs into smaller steps on its own. Think of planning a trip: it checks the weather from images, times from schedules, and costs from lists, all in one flow. Users notice fewer mistakes because it pauses to reason internally first.
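
The trip-planning example can be sketched as a plan that runs step by step through tools. Everything here is hypothetical: the tool names, the stub functions, and the hard-coded plan, which a real agent would have the model generate. It is not Gemini's function-calling API, only an illustration of the plan-then-execute loop.

```python
def run_plan(plan, tools):
    """Run a plan one step at a time. Each step is (tool_name, argument);
    results are collected in order so a final answer can combine them."""
    results = []
    for step, (tool_name, arg) in enumerate(plan, start=1):
        if tool_name not in tools:
            raise KeyError(f"step {step}: unknown tool {tool_name!r}")
        results.append((tool_name, tools[tool_name](arg)))
    return results

# Hypothetical stub tools standing in for real weather, timetable and price lookups.
tools = {
    "weather": lambda city: f"sunny in {city}",
    "schedule": lambda day: f"train leaves 09:00 on {day}",
    "cost": lambda prices: sum(prices),
}
# In a real agent the model would write this plan; here it is hard-coded.
plan = [("weather", "Lisbon"), ("schedule", "Friday"), ("cost", [120, 80, 45])]
for name, result in run_plan(plan, tools):
    print(name, "->", result)
```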

Safety checks run deep too, with tests on tricky edge cases. Google rolled it out to everyone through the Gemini app, though paid users get more access. In my own tests, it handled photo edits with voice notes much more smoothly than earlier versions.


How It Fits in the Gemini Family

Gemini 3.1 Pro builds on earlier members of the family, such as Gemini 3 Flash and Gemini 3.1 Flash-Lite. Pro takes the big-brain role for hard work, while the Flash versions answer quickly for everyday tasks. All share the same early data mix, turning pictures and sounds into the same sequence as words.

Compared with Gemini 3 from late 2025, this version adds stronger coding help and multi-step planning. It posts leading scores on benchmarks such as ARC-AGI-2, a set of abstract reasoning puzzles that other models have struggled to solve. Teams now build agents with it that call tools or fix code automatically in a loop.

Last week I tried it on a project that mixed code snippets with error screenshots, and it debugged the flow end to end with no back-and-forth needed. That's the shift: from chat replies to full systems that act.

Conclusion

DeepMind's Gemini model brings AI closer to everyday life by blending text, pictures, sounds, and videos in one flow from the very start. Hand it vacation photos along with voice clips and notes, and it pieces together the whole story without fuss. From Nano on phones for quick checks to Ultra for in-depth business analysis, there is a size for every need.

Mixing inputs from the start and thinking through steps internally cuts down on wrong turns, giving accurate replies for messy, mixed-media work. App makers and chat users alike feel the gain in tools that fit everyday life.

FAQ

What is DeepMind Gemini model architecture in plain words?

All inputs, whether words, pictures, or clips, become tokens in one sequence. Transformer stacks link them together, and the model thinks through steps internally before replying.

How does DeepMind Gemini model architecture mix pictures and sounds?

Pictures become small patches and sounds become short audio segments. Both sit next to the text from the start, so the model can quickly tie the sound of rain to photos of wet streets.

What makes DeepMind Gemini model architecture faster than past models?

A gate picks the right expert blocks for each token and skips the rest. A very large model can then run quickly on servers without using its whole network every time.

Does DeepMind Gemini model architecture run on phones?

Yes. Gemini Nano runs on-device for quick tasks like sorting photos, and for simple jobs the data stays on the phone with no cloud round trip.

Why pick DeepMind Gemini model architecture for your work?

It keeps large inputs, such as full video clips or logs, whole and combines them to produce plans or summaries. That can save hours compared with juggling separate tools for each input type.